beyond anecdata

One of my favorite workplace neologisms is the term “anecdata.” Depending on the day, it’s also my least favorite neologism. The word is a portmanteau of anecdote and data. It’s an observation that perhaps lacks the rigor of academia but that we believe to be true anyway. In the working world, anecdata can be a classy name for a hunch. But hunches, outside of pro-police TV propaganda, are often wrong.

Many people learned about the scientific method in grade school. It usually goes something like this: observe something, ask a question, do research, come up with a hypothesis, test it, then draw a conclusion. It’s important that you do those in order.

Say you start with a conclusion: “Half of our community speaks Spanish.” If you set out to test that conclusion, you might look only for Spanish speakers and miss people who speak other languages. This is a form of confirmation bias. If we start out believing we’re right, we may seek out or trust only information that confirms our belief. Try this instead: “I’ve noticed that people in our community don’t always know English. What languages might they feel most comfortable using here?”

why people like it

It’s not always easy to test our hypotheses. Formal evaluations tend to be difficult or tedious. We often need a researcher or evaluator to follow a formal data collection process. And the harder the data is to collect, the harder it may be to interpret. Consider these questions:

“Are people seeing our new media campaign?” You can measure the number of times people view your page, click on it, like it, or engage with it in some other way. This is pretty easy.

“How do people feel about our new media campaign?” You could give people a survey to complete. You could present a simple multiple-choice question, “I hate it / I’m fine with it / I like it.” What if your new campaign enrages or frustrates people? What if it makes them sad? How can you capture the full range of human emotion? What if you left a space where people could share what they feel? It may take a lot of work to interpret and categorize these responses. What about a person who feels chartreuse, or scandalized, or tepid?
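If you’re curious what that sorting looks like in practice, here is a minimal Python sketch. It assumes the three answer choices from the example question above, and the names `CHOICES` and `summarize` and the sample responses are all made up for illustration. It counts the multiple-choice answers and sets aside everything else for a person to read and categorize by hand.

```python
from collections import Counter

# Hypothetical answer options, taken from the example question above.
CHOICES = ["I hate it", "I'm fine with it", "I like it"]


def summarize(responses):
    """Tally multiple-choice answers; set aside anything else for a human to read."""
    counts = Counter({choice: 0 for choice in CHOICES})
    free_text = []
    for raw in responses:
        answer = raw.strip()
        if answer in CHOICES:
            counts[answer] += 1
        elif answer:  # skip blank responses
            free_text.append(answer)
    return counts, free_text


# Made-up responses, for illustration only.
sample = [
    "I like it",
    "I hate it",
    "I like it",
    "It made me feel chartreuse",
    "  ",
    "I'm fine with it",
]

counts, free_text = summarize(sample)
for choice in CHOICES:
    print(f"{choice}: {counts[choice]}")
print(f"Needs a human read: {free_text}")
```

The counting is the easy part. The list of free-text answers is where the real interpretive work begins, and no script does that for you.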

Most people at work don’t need a rigorous study to draw conclusions, but they need more than a hunch.

why it’s not so great

I already talked about confirmation bias. Collecting anecdata without testing can reinforce what you already believe without proving it. It can convince you that you’re on the right track when you’re not.

A researcher joined our organization as part of a national fellowship program. She worked with us for six months, researching and analyzing data that we could use for our programs. She gave us plenty to think about as we made policy and plans based on her work. She identified priorities that we thought were right but hadn’t confirmed. She did so with a level of detail that could have taken us years or a lucky break to figure out on our own.

putting the data in anecdata

I shared a form of the above with my excellent friend Emily. She’s an epidemiologist and future doctor of public health. And even more important (for me): she runs a tasty food blog called The Pie That Binds. Emily shared with me a few tools that could help someone bridge the gap between a guess and a research study.

Here are a few things that Emily shared with me, in order of complexity:

- The University of Wisconsin offers tools and forms that we could adapt to a small-scope project. She notes that they include short planning worksheets that may be useful.

- The University of Kansas offers a Community Check Box that is closer to hiring a paid evaluator. Unlike the Wisconsin tools, this one costs money. The website says they help programs measure effectiveness and package the results.

- The Centers for Disease Control and Prevention has a free guide to program evaluation. It’s long and very detailed, but it’s accessible and comprehensive.

- Another website I found is aptly named Anecdata. It’s a free app by the MDI Biological Lab in Maine. Citizen scientists can create a research project and enlist others to help collect data. It seems like fun!

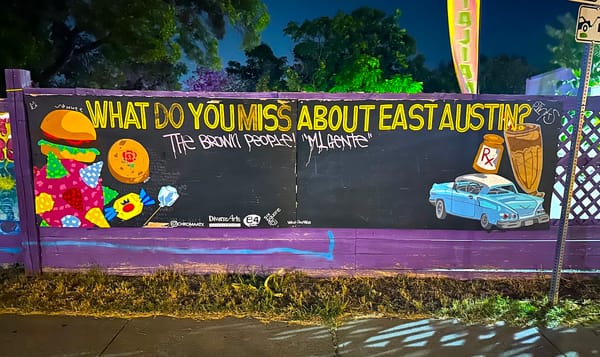

There’s also a line of formal inquiry that we rarely pose: questions about ourselves. What is our role here? What data have we already collected? How have we already put that knowledge into our existing work? A guide I love comes from Chicago Beyond, titled “Why Am I Always Being Researched?” Some communities get buried in focus groups, surveys, and studies. But the people who study them don’t do enough with what they’ve learned. People in power are often willing to conduct a study or reach out to historically excluded communities. They do so to say they’ve done it, then do what they wanted anyway. These efforts set back our progress towards real justice.

These considerations can lead to more. What do you already believe to be true? How can you test that? What would you do if your test revealed a different conclusion than the one you expect? More broadly, do you belong in this community or are you here uninvited? Who could you be taking up space from?

People often struggle to meet the needs of a community they don’t understand. Historically, this has given people in power the license to ignore those communities. But in reality, people shouldn’t have outsized power over others. We should be working with communities to help them co-create the solutions they need. If we can’t do that, we should start by transferring our power and wealth to the people who can.